The problem: The stats lab I had inherited relied heavily on a copy/paste method of teaching. Students A) didn’t like that very much and B) weren’t learning to troubleshoot when there were mistakes in the code. When they ran the code and it didn’t work, instead of trying to use the error messages provided by the statistics program, they would automatically raise their hand and wait for me to come rescue them.

The innovation: I created a series of exercises that contained intentionally bad code. These were given to students at the beginning of class, covering what we learned during the last session. Their task was to fix what was broken and get the code to run. They were also asked to report results appropriately once they had successfully achieved them. In Fall 2014, students received these exercises for half the topics in the course. In Fall 2015, they received an exercise at the start of every class.
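To give a flavor of the format, here is a hypothetical exercise written in Python rather than the statistics program used in the lab. The data, the variable names, and the three planted bugs are invented for illustration and are not taken from the actual course materials. The broken version appears in comments so the file still runs, followed by one possible fix.

```python
from statistics import mean, stdev

# Hypothetical exam scores, invented for this illustration.
scores = [72, 85, 90, 66, 78]

# --- Broken version handed to students (commented out so this file runs) ---
# average = mean(score)        # NameError: 'score' was never defined
# spread = stdev(scores        # SyntaxError: the parenthesis is never closed
# print("M = " + average)      # TypeError: cannot join a string and a number

# --- One possible fix ---
average = mean(scores)   # use the variable that actually exists
spread = stdev(scores)   # close the parenthesis
# Format the numbers instead of concatenating them onto a string.
report = f"M = {average:.2f}, SD = {spread:.2f}"
print(report)
```

Each planted bug produces a different kind of error message, so students have to read the message rather than guess at the fix.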

The assessment: Students rated their confidence for conducting, troubleshooting, reporting, and creating a variety of statistical analyses covered in the course on a scale from 1 (no confidence or experience) to 5 (full confidence/mastery) during both the first and last class of the semester. During the last class they were also asked to rank seven components of the course, including the Make it Work! exercises, from most to least helpful, and were given a chance to provide open-ended feedback.
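One simple way to turn these ratings into a confidence gain is sketched below. It assumes that gain is defined as the last-class rating minus the first-class rating, which may differ from the exact calculation used in this project. The topics, the ratings, and the `mean_gain` helper are all hypothetical.

```python
from statistics import mean

# Hypothetical 1-5 ratings for one student, invented for illustration.
# Each topic maps to (first-class rating, last-class rating, had_exercise).
ratings = {
    "reading in data": (1, 4, True),
    "normality tests": (2, 4, True),
    "regression": (1, 2, False),
    "ANOVA": (2, 3, False),
}

def mean_gain(ratings, had_exercise):
    """Average (last - first) rating over topics with the given exercise status."""
    gains = [
        post - pre
        for pre, post, flag in ratings.values()
        if flag == had_exercise
    ]
    return mean(gains)

gain_with = mean_gain(ratings, True)      # topics that had an exercise
gain_without = mean_gain(ratings, False)  # topics that did not
print(f"with exercise: {gain_with}, without: {gain_without}")
```

Comparing the two averages across all students is the kind of contrast the results describe.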

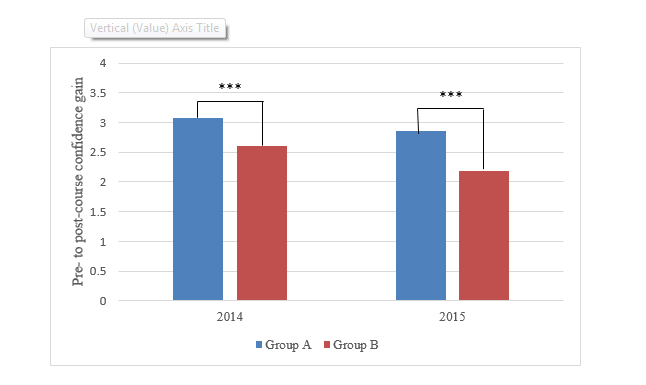

The results: In Fall 2014, students gained more confidence when they had a Make it Work! exercise for a topic than when they didn’t.

However, in 2015, when every topic had an exercise, students still reported more confidence gain for the topics that had exercises in 2014 (Group A) than for the others (Group B). This suggested that factors other than the Make it Work! exercises may have contributed to the confidence gains. I suspected that practice effects may have been at work, so I compared confidence gain for practiced vs. unpracticed skills. In this case, practiced skills were those that students used time and time again in the statistics lab. For example, reading a data set into the SAS program or running tests of normality would be considered practiced skills, because one has to do both of those before running any other statistical analysis. As it happened, the course topics that had a Make it Work! exercise assigned in both 2014 and 2015 were most often practiced skills, while topics that only had a Make it Work! exercise assigned in 2015 were most often unpracticed skills.

Strangely, 2014 students didn’t report more confidence gain for practiced vs. unpracticed skills, but 2015 students did. I think that, in 2014, when students only had a few Make it Work! exercises as alternatives to traditional instruction, the exercises did help raise confidence. However, in 2015, when Make it Work! exercises were the classroom norm, greater confidence was gained for the skills that got more exposure and practice on top of having an exercise. Therefore, neither targeted, hands-on activities like the Make it Work! exercises nor repeated exposure to material is sufficient on its own for the greatest gains in student confidence. Rather, both should be used in combination for the greatest benefit to students.

It will be valuable to understand the complexity of these relationships, because gains in student confidence were associated with better grades in the statistics lab. And, regardless of the quantitative results, students in the lab had a positive impression of the exercises. Of 7 components of the course, the median ranking for the Make it Work! exercises was 2nd or 3rd most helpful, behind being provided example code and the course assignments. Students also had overwhelmingly positive things to say:

“I found them helpful because they featured common mistakes.”

“Helpful–hands on experience with the code is always good.”

“I like them. I thought they were very helpful in understanding the syntax via troubleshooting. I just think doing more of them would be better.” [Fall 2014; emphasis added]

Please feel free to contact me if you would like examples of the Make it Work! exercises.

(This project was completed in part to fulfill requirements of the CIRTL Scholar through CIRTL@UAB.)